The University of Illinois Urbana-Champaign is leading a new research initiative to make voice recognition technology more useful for people with diverse speech patterns and disabilities. The Speech Accessibility Project launched this fall with cross-industry support from Amazon, Apple, Google, Meta, and Microsoft, as well as nonprofit organizations whose communities will benefit from this accessibility initiative.

While the project is based at the Beckman Institute for Advanced Science and Technology, the Department of Linguistics is also playing an important role. Linguistics professor Heejin Kim is on the research team, and the project is led by Mark Hasegawa-Johnson, a professor of electrical and computer engineering and affiliate professor of linguistics.

“The option to communicate and operate devices with speech is crucial for anyone interacting with technology or the digital economy today,” said Hasegawa-Johnson. “Speech interfaces should be available to everybody, and that includes people with disabilities. This task has been difficult because it requires a lot of infrastructure, ideally the kind that can be supported by leading technology companies, so we’ve created a uniquely interdisciplinary team with expertise in linguistics, speech, AI, security, and privacy to help us meet this important challenge.”

Today’s speech recognition systems, such as voice assistants and translation tools, don’t always recognize the diverse speech patterns often associated with disabilities. This includes speech affected by amyotrophic lateral sclerosis (ALS, also known as Lou Gehrig’s disease), Parkinson’s disease, cerebral palsy, and Down syndrome.

“Millions of people in our society are affected by diseases that impact their motor capability to produce speech,” said Kim. “Parkinson’s disease alone affects nearly one million Americans, with about 60,000 being newly diagnosed every year. By creating speech data that is representative of such populations and by supporting new speech technology development, we ultimately hope to improve the quality of life for people with disabilities.”

With artificial intelligence and machine learning, technology companies can address the need for more inclusive speech recognition. To support this goal, the Speech Accessibility Project will collect speech samples from individuals representing a diversity of speech patterns. UIUC researchers will recruit paid volunteers to contribute recorded voice samples and will create a private, de-identified dataset that can be used to train machine learning models to better understand a wide variety of speech patterns. The project will focus first on American English.

Kim said the project’s roots can be traced back to 2008, when she and Hasegawa-Johnson developed the Universal Access (UA) Speech corpus, which consists of speech samples from people with cerebral palsy, and made it available to researchers worldwide. Since its release, the corpus has been used in hundreds of research papers, and speech recognition error rates have been reduced by a factor of three.

“While we were happy with the impact of the UA Speech corpus on the field, we knew we should scale up the size and scope of the speech data so that we could create speech technologies that actually work in real life for people with various speech disorders,” said Kim. “We are excited that the opportunity to do so is finally happening. In the Speech Accessibility Project, I will be directing speech material selection procedures and text annotation tasks.”

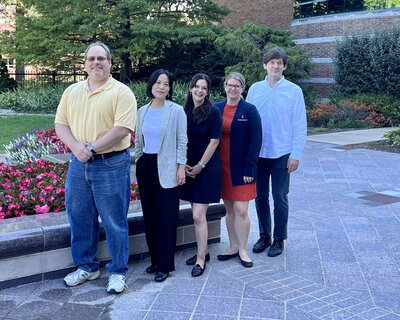

In addition to Kim and Hasegawa-Johnson, the Speech Accessibility Project includes Clarion Mendes, a clinical professor in speech and hearing science and a speech-language pathologist. The team also includes several staff members from the Beckman Institute, among them information technology professionals who will build a secure repository for the de-identified speech samples.

Two community-based organizations, the Davis Phinney Foundation and Team Gleason, have already pledged support for the project.

“The Davis Phinney Foundation’s mission is to help people with Parkinson’s live well today,” said the foundation’s executive director Polly Dawkins. “Part of that commitment includes ensuring people with Parkinson’s have access to the tools, technologies, and resources needed to live their best lives. We are thrilled to partner with this team to ensure that this effort can benefit our community.”

Team Gleason, which serves the ALS community through a broad range of programming, assistive technology, equipment, and robust support services, shares a similar goal of expanding the usefulness of speech recognition tools.

“Team Gleason strives each day to provide the best available assistive technology for the ALS community while simultaneously exploring ways to advance future solutions,” said Blair Casey, executive director for Team Gleason. “Technology has the ability to overcome communication barriers and increase independence. Team Gleason is proud to help accelerate this effort for people living with ALS and anyone else with speech differences.”

Community organizations will assist in participant recruitment and user testing, as well as provide feedback at various stages of the project. Kim said none of this would be possible without the widespread support this project has received so far.

“Having a strong team with such a wide array of support is essential for this project’s success,” said Kim. “For instance, it would be too difficult for just one company to develop speech technologies that are as inclusive as we believe they should be, so having multiple tech companies work together with us makes our goal attainable. These companies, meanwhile, chose the U of I because of our strong tradition and skill set in accessibility. In addition, having community-based organizations involved is vital, since we want every aspect of the speech data collection and technology development to be tailored to the needs of people who will use the final product.”

Dania De La Hoya Rojas, Beckman Institute for Advanced Science and Technology

Editor's note: A version of this story first appeared on the Speech Accessibility Project website, where you can find more information about the project.